Unzipping those revealed the JSONL files. In the sample data I used for, unzipping the downloaded TAR file gave me a date folder, and in that date folder were hundreds of gz ZIP files. The rationale was to separate importing and parsing from making these selections, as we’re not going to want to repeat the time-consuming first part while we’re tweaking and modifying the second part. The second reads in that file, selects the elements and values that we want into a list format, and writes those out to a CSV. I wrote two scripts: the first reads in and aggregates all the tweets from the JSONL files, parses them into a Python dictionary, and writes out the geo-located records into regular JSON. See GitHub for the full scripts – I’ll just add some snippets here for illustration. These are JSON files where each JSON record has been collapsed into one line. Unzip those, and you have tons of JSON Line files. Unzipping it gives you a folder for that data named for the date, with hundreds of gz ZIP files. I went into the November 2022 collection and downloaded the file for Nov 1st. The file I downloaded from IA for one day had over 4 million tweets, so that’s about 1% of all tweets. Thanks to the internet, it’s easy to find statistics but hard to find reliable ones – this one, credible looking source (the GDELT Project) suggests that there are between 400 and 500 million tweets a day in recent years. The IA describes the Stream Grab as the “spritzer” version of Twitter grabs (as opposed to a sprinkler or garden hose). I didn’t investigate this latter one, because I wasn’t sure if it provided the actual tweets or just metadata about them, while the older project definitely did. This project is no longer active, but there’s a newer one called the Twitter Archiving Project which has data from 2017 to now. Their Twitter Stream Grab consists of monthly collections of daily files for the past few years, from 2016 to 2022. 
The application keys, which functioned for the last 7 years, have been rescinded by Twitter.”įortunately, there is the Internet Archive, which has been working to preserve pieces of the internet for posterity for several decades. “Twitter’s changes to their API which greatly reduce the amount of read-only access means that the Hydrator is no longer a useful application. You can search and get IDs to the tweets, using their Hydrator application, which you can use in turn to get the actual tweets.

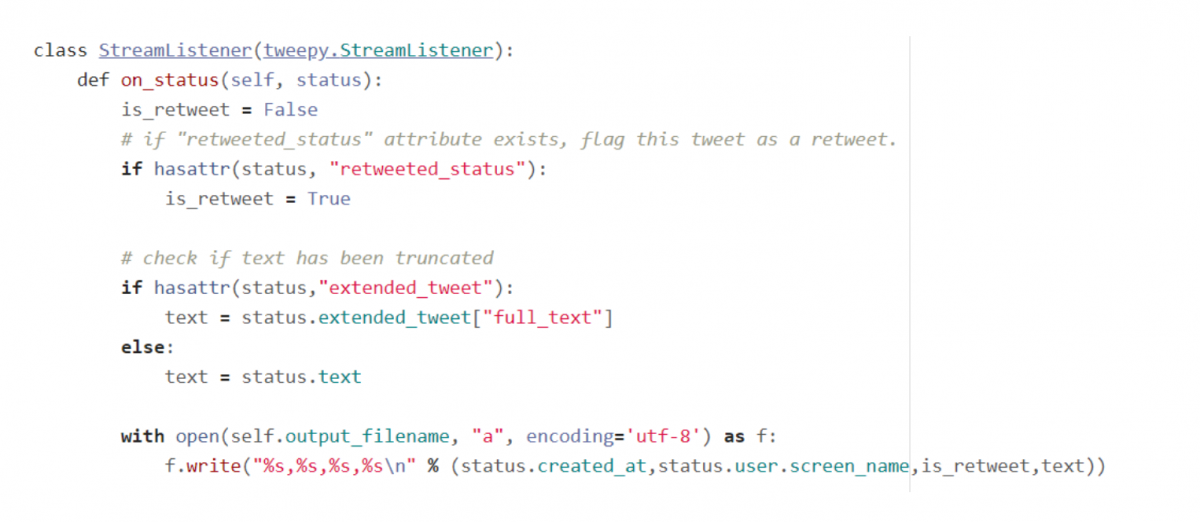

But alas, there are rules to follow, and to comply with Twitter’s license agreement Harvard and these institutions can’t directly share the raw tweets with anyone outside their institutions. They are also affiliated with a much larger project called DocNow with other schools that have different tweet archives. They have an archive of geolocated tweets in their dataverse repository, and another one for political tweets. But I thought – surely, someone else has scraped tons of tweets for academic research purposes and has archived them somewhere – could we just access those? Indeed, the folks at Harvard have. You can register under Twitter’s new API policy and get access to a paltry number of records. Many of these projects rely on third party modules that are deprecated or dodgy (or both), and even if you can escape from dependency hell and get everything working, the changed policies rendered them moot. Surely there must be another solution – there are millions of posts on the internet that show how easy it is to grab tweets via R or Python! Alas, we tried several alternatives to no avail. But the graduate student I was helping last week and I discovered that both the Twint and TAGS tools no longer function due to changes in Twitter’s developer policies. When such questions turn up from students, I’ve always turned to the great Web Scraping Toolkit developed by our library’s Center for Digital Scholarship. Social media data is not my forte, as I specialize in working with official government datasets. What I’m presenting here is one, simple solution. Given all the turmoil at Twitter in early 2023, most of the tried and true solutions for scraping tweets or using their APIs no longer function. I’ve created some straightforward scripts in Python without any 3rd party modules for processing a daily file of tweets.

These are tweets where the user opted to have their phone or device record the longitude and latitude coordinates for their location, at the time of the tweet. In this post I’ll share a process for getting geo-located tweets from Twitter, using large files of tweets archived by the Internet Archive.
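The two-stage workflow can be sketched with only the Python standard library. This is not the code from the GitHub repo. It is a minimal sketch that makes a few assumptions:

* The archived records are Twitter API v1.1 tweet objects. In that format, a geotagged tweet carries a non-null `coordinates` field holding `[longitude, latitude]`.
* The function names `extract_geo_tweets` and `geo_json_to_csv` are hypothetical.
* The `FIELDS` column selection is also hypothetical.

One more design choice: `gzip.open` reads each .gz file directly, so the hundreds of files never need to be unzipped to disk first.

```python
import csv
import gzip
import json
from pathlib import Path


def extract_geo_tweets(gz_folder, out_json):
    """Stage 1: read every gzipped JSONL file under gz_folder and keep geotagged tweets.

    Assumption: records are Twitter v1.1 tweet objects, where a geotagged tweet has a
    non-null "coordinates" field shaped like {"type": "Point", "coordinates": [lon, lat]}.
    """
    geo_tweets = []
    # rglob also finds .gz files in any subfolders of the extracted date folder
    for gz_path in sorted(Path(gz_folder).rglob("*.gz")):
        # Text mode decompresses on the fly, one JSON record per line
        with gzip.open(gz_path, "rt", encoding="utf-8") as f:
            for line in f:
                line = line.strip()
                if not line:
                    continue
                try:
                    record = json.loads(line)
                except json.JSONDecodeError:
                    continue  # skip truncated or malformed lines
                # Non-tweet records (e.g. delete notices) lack "coordinates", and
                # tweets without a location have it set to null, so both are skipped
                if isinstance(record, dict) and record.get("coordinates"):
                    geo_tweets.append(record)
    with open(out_json, "w", encoding="utf-8") as f:
        json.dump(geo_tweets, f)
    return len(geo_tweets)


# Hypothetical column selection; adjust to whatever your analysis needs
FIELDS = ["tweet_id", "created_at", "user", "lang", "text", "longitude", "latitude"]


def geo_json_to_csv(in_json, out_csv):
    """Stage 2: pull selected values from the saved JSON into rows and write a CSV."""
    with open(in_json, encoding="utf-8") as f:
        tweets = json.load(f)
    rows = []
    for t in tweets:
        lon, lat = t["coordinates"]["coordinates"]  # GeoJSON order: longitude first
        rows.append([
            t.get("id_str"),
            t.get("created_at"),
            t.get("user", {}).get("screen_name"),
            t.get("lang"),
            t.get("text"),
            lon,
            lat,
        ])
    # newline="" as the csv docs recommend, so line endings aren't doubled on Windows
    with open(out_csv, "w", newline="", encoding="utf-8") as f:
        writer = csv.writer(f)
        writer.writerow(FIELDS)
        writer.writerows(rows)
    return len(rows)
```

A typical run calls `extract_geo_tweets("2022-11-01", "geo_tweets.json")` once, then `geo_json_to_csv("geo_tweets.json", "geo_tweets.csv")`. The folder name here is only an example. Because the slow decompress-and-parse step writes its own file, you can rerun the second function as often as you like while changing the selected fields, without touching the .gz files again.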
